When considering whether or not to shift your existing SQL workloads to a single database offering on Azure SQL, one of the major pros is the breadth of capabilities the service can offer when compared with other vendors or in comparison to SQL Server on an Azure Virtual Machine. A list of these may include:

- High feature parity with the latest on-premise SQL Server offering.

- Built-in support for Enterprise product features, such as Transparent Database Encryption.

- Security management features, such as firewalls and (optional) integration with Azure SQL Database Threat Detection for proactive monitoring.

- Ability to quickly scale a database from a 2GB database with low CPU consumption to a mammoth 4TB database, with a significant pool of CPU/memory resources to match.

It is the last one of these that makes Azure SQL database a particularly good fit for web application deployments that have unpredictable user loads at the time of deployment or, as we have seen previously on the blog, when you are wanting to deploy out a LOB reporting database that houses Dynamics 365 Customer Engagement instance data. Administrators can very straightforwardly scale or downscale a database at any time within the portal or, if you are feeling particularly clever, you can look to implement automatic scaling based on Database Throughput Unit (DTU) consumption. This can aid towards making your query execution times as speedy as possible.

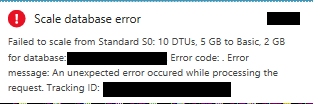

Database scaling, I have found, is very straightforward to get your head around and works like a charm for the most part…except, of course, when you get rather cryptic error messages like the one demonstrated below:

I got this error recently when attempting to scale an S0 5GB database down to Basic 2GB tier. To cut a long story short, I had temporarily scaled up the database to give me increased DTU capacity for a particularly intensive query, and wanted to scale it back to its original pricing tier. You can perhaps understand my confusion about why this error was occurring. After further research and escalation to Microsoft, it turns out that the database was still consuming unused disk space on the platform, thereby violating any size limits imposed by moving to a lower price tier. To resolve the issue, there are some tasks that need to be performed on the database to get it into a “downscale-ready state”. These consist of a series of T-SQL scripts, which I would caution against using if the database is currently in use, due to potential performance impacts. If you have found yourself in the same boat as me and are happy to proceed, the steps involved are as follows:

- To begin with, the script below will execute the DBCC SHRINKDATABASE command against the database, setting the database file max size to the value specified on the @DesiredFileSize parameter. The script is compiled so as to perform the shrinking in “chunks” based on the value of the @ShrinkChunkSize parameter, which may be useful in managing DTU consumption:

SET NOCOUNT ON

DECLARE @CurrentFileSize INT, @DesiredFileSize INT, @ShrinkChunkSize INT, @ActualSizeMB INT,

@ErrorIndication INT, @dbFileID INT = 1, @LastSize INT, @SqlCMD NVARCHAR(MAX),

@msg NVARCHAR(100)

/*Set these values for the current operation, size is in MB*/

SET @DesiredFileSize = 2000 /* filesize is in MB */

SET @ShrinkChunkSize = 50 /* chunk size is in MB */

SELECT @CurrentFileSize = size/128 FROM sysfiles WHERE fileid = @dbFileID

SELECT @ActualSizeMB = (SUM(total_pages) / 128) FROM sys.allocation_units

SET @msg = 'Current File Size: ' + CAST(@CurrentFileSize AS VARCHAR(10)) + 'MB'

RAISERROR(@msg,0,0) WITH NOWAIT

SET @msg = 'Actual used Size: ' + CAST(@ActualSizeMB AS VARCHAR(10)) + 'MB'

RAISERROR(@msg,0,0) WITH NOWAIT

SET @msg = 'Desired File Size: ' + CAST(@DesiredFileSize AS VARCHAR(10)) + 'MB'

RAISERROR(@msg,0,0) WITH NOWAIT

SET @msg = 'Interation shrink size: ' + CAST(@ShrinkChunkSize AS VARCHAR(10)) + 'MB'

RAISERROR(@msg,0,0) WITH NOWAIT

SET @ErrorIndication = CASE

WHEN @DesiredFileSize > @CurrentFileSize THEN 1

WHEN @ActualSizeMB > @DesiredFileSize THEN 2

ELSE 0 END

IF @ErrorIndication = 1

RAISERROR('[Error] Desired size bigger than current size',0,0) WITH NOWAIT

IF @ErrorIndication = 2

RAISERROR('[Error] Actual size is bigger then desired size',0,0) WITH NOWAIT

IF @ErrorIndication = 0

RAISERROR('Desired Size check - OK',0,0) WITH NOWAIT

SET @LastSize = @CurrentFileSize + 1

WHILE @CurrentFileSize > @DesiredFileSize /*check if we got the desired size*/ AND @LastSize>@CurrentFileSize /* check if there is progress*/ AND @ErrorIndication=0

BEGIN

SET @msg = CAST(GETDATE() AS VARCHAR(100)) + ' - Iteration starting'

RAISERROR(@msg,0,0) WITH NOWAIT

SELECT @LastSize = size/128 FROM sysfiles WHERE fileid = @dbFileID

SET @sqlCMD = 'DBCC SHRINKFILE('+ CAST(@dbFileID AS VARCHAR(7)) + ',' + CAST(@CurrentFileSize-@ShrinkChunkSize AS VARCHAR(7)) + ') WITH NO_INFOMSGS;'

EXEC (@sqlCMD)

SELECT @CurrentFileSize = size/128 FROM sysfiles WHERE fileid =@dbFileID

SET @msg = CAST(getdate() AS VARCHAR(100)) + ' - Iteration completed. current size is: ' + CAST(@CurrentFileSize AS VARCHAR(10))

RAISERROR(@msg,0,0) WITH NOWAIT

END

PRINT 'Done'

- With the database successfully shrunk, verify that the size of the database does not exceed your target @DesiredFileSize value by running the following query:

SELECT * FROM sys.database_files

SELECT (SUM(reserved_page_count) * 8192) / 1024 / 1024 AS DbSizeInMB

FROM sys.dm_db_partition_stats

- Although by this stage, the database file sizes should be underneath 2GB, the maximum size of the database is still set to match the pricing tier level. To fix this, execute the following script, substituting the name of your database where appropriate:

ALTER DATABASE MyDatabase MODIFY (MAXSIZE=2GB)

You can confirm that this command has been executed successfully by then running the following query and reviewing the output:

SELECT CAST(DATABASEPROPERTYEX ('MyDatabase', 'MaxSizeInBytes') AS FLOAT)/1024.00/1024.00/1024.00 AS 'DB Size in GB'

- With the above commands executed, you are now in a position to scale down your database without issue. There are a few ways this can be done but, as you likely already have SQL Server Management Studio or similar open to run the above queries, you can modify the tier of your database via this handy script:

--Scaling down to Basic is easy, as there is only one Max Size/Service Level Objective

--Therefore, just specify Edition

ALTER DATABASE MyDatabase MODIFY (EDITION = 'Basic');

--For other tiers, specify the size of the DB.

--In this example, we are scaling down from Premium P1 1TB to Standard S2 250GB tier

ALTER DATABASE MyDatabase MODIFY (EDITION = 'Standard', MAXSIZE = 250 GB, SERVICE_OBJECTIVE = 'S2');

Although the script will likely execute immediately and indicate as such in any output, the actual scaling operation on the backend Azure platform can take some time to complete - usually about 5-10 minutes for lower sized databases.

Whilst I was relieved that a workaround was available to get the database scaled down correctly, it would have been useful if the above error message was signposted better or if there was some kind of online support article that detailed that this could be a potential issue when moving a database between various pricing/sizing tiers. Hopefully, by sharing the above steps, others who in the same boat can very quickly diagnose and resolve the issue without hammering your credit card with increased database usage charges in the process. 🙂