As you can probably tell from my recent post history (and from the talks I have had the pleasure of delivering so far this year), I am really into Azure Data Factory (ADF) in a massive way at the moment. It really can provide a lot of benefits for organisations who have a particular focus on DevOps and cost-optimisation and, what’s more, the product is fully compatible with Dynamics 365 Customer Engagement. Just be aware that you might need to devote some towards defining your entity mapping schemas and in putting in place creative solutions to handle some field mapping limitations. And, as a cloud solution that is continually evolving by Microsoft, it is not unreasonable to expect the occasional bug, gremlin or bizarre feature limitation, that you could not have anticipated would be present. However, I can say with some degree of confidence that the product is in a stable state for serious consideration as part of your next data integration project.

A good example of the types of roadblocks alluded to in the previous paragraph can be found in a recent issue myself, and a colleague was having with a particular Copy Activity within ADF that I had authored. Upon execution, it would always throw the following error message whenever it was called from within our pipeline:

{

"errorCode": "2200",

"message": "ErrorCode=UserErrorSqlBulkCopyInvalidColumnLength,'Type=Microsoft.DataTransfer.Common.Shared.HybridDeliveryException,Message=SQL Bulk Copy failed due to received an invalid column length from the bcp client.,Source=Microsoft.DataTransfer.ClientLibrary,''Type=System.Data.SqlClient.SqlException,Message=The service has encountered an error processing your request. Please try again. Error code 4815.\r\nA severe error occurred on the current command. The results, if any, should be discarded.,Source=.Net SqlClient Data Provider,SqlErrorNumber=40197,Class=20,ErrorCode=-2146232060,State=1,Errors=[{Class=20,Number=40197,State=1,Message=The service has encountered an error processing your request. Please try again. Error code 4815.,},{Class=20,Number=0,State=0,Message=A severe error occurred on the current command. The results, if any, should be discarded.,},],'",

"failureType": "UserError",

"target": "IncrementalCopyActivity"

}

The activity in question was reasonably straightforward and derived from Microsoft’s example on how to work with change tracking data within SQL, and we were using the following query to get our data from our linked SQL database:

SELECT MyTableUID, MyField

FROM [dbo].[MyTable] AS MT

INNER JOIN CHANGETABLE(CHANGES [dbo].[MyTable], @{activity('LookupLastChangeTrackingVersionActivity').output.firstRow.SYS_CHANGE_VERSION}) AS CT

ON MT.MyTableUID = CT.MyTableUID

WHERE CT.SYS_CHANGE_VERSION <= @{activity('LookupCurrentChangeTrackingVersionActivity').output.firstRow.CurrentChangeTrackingVersion}

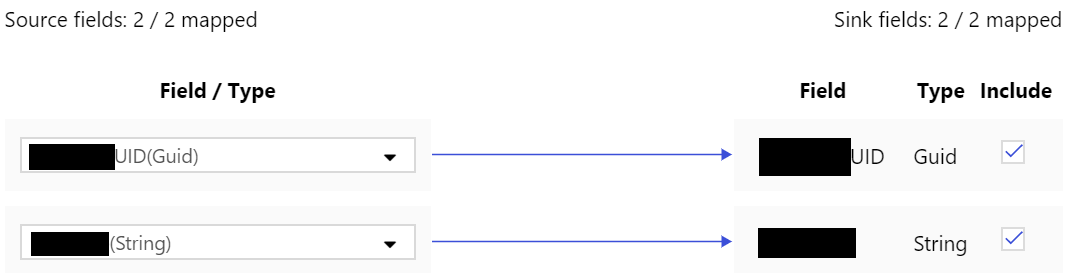

These field values were then mapped across to another SQL database, with only a partial selection of fields from the MyTable table occurring:

We checked the most obvious things first before almost giving up in despair:

- Regenerated the mappings from scratch, in case the definitions were out of date.

- Double checked the underlying field types and verified they were of the same type/length.

- Recreated the data source and linked service from scratch - again, no dice.

At this juncture, I ended up throwing in the towel and logging a support ticket with Microsoft, assuming it was a problem with ADF. But my very knowledgeable colleague Shuky Lee had other ideas. After doing some additional tinkering, she was able to get the pipeline working successfully by modifying the SQL query as follows:

SELECT MT.MyTableUID, MT.MyField

FROM [dbo].[MyTable] AS MT

INNER JOIN CHANGETABLE(CHANGES [dbo].[MyTable], @{activity('LookupLastChangeTrackingVersionActivity').output.firstRow.SYS_CHANGE_VERSION}) AS CT

ON MT.MyTableUID = CT.MyTableUID

WHERE CT.SYS_CHANGE_VERSION <= @{activity('LookupCurrentChangeTrackingVersionActivity').output.firstRow.CurrentChangeTrackingVersion}

Eagle-eyed SQL developers may have already spotted the subtle change, but to explain, it appears as if the query was unable to successfully determine whether to select the columns from MyTable (aliased as MT) or the Change Tracking version of the same table (aliased as CT). By fixing this error and ensuring that the MyTableUID and MyField are prefixed with the MT aliased value, the query can now correctly determine which table to “grab” the data from, and the pipeline completes successfully. I think me and Shuky were relieved that we were able to resolve this issue and get this piece of functionality working as intended. And, in a way, I was glad that it was an issue with my query as opposed to ADF itself, as that goes some way towards confirming my earlier comments about how stable and mature the product is 🙂